Optimization-inspired Deep Learning — Current Projects

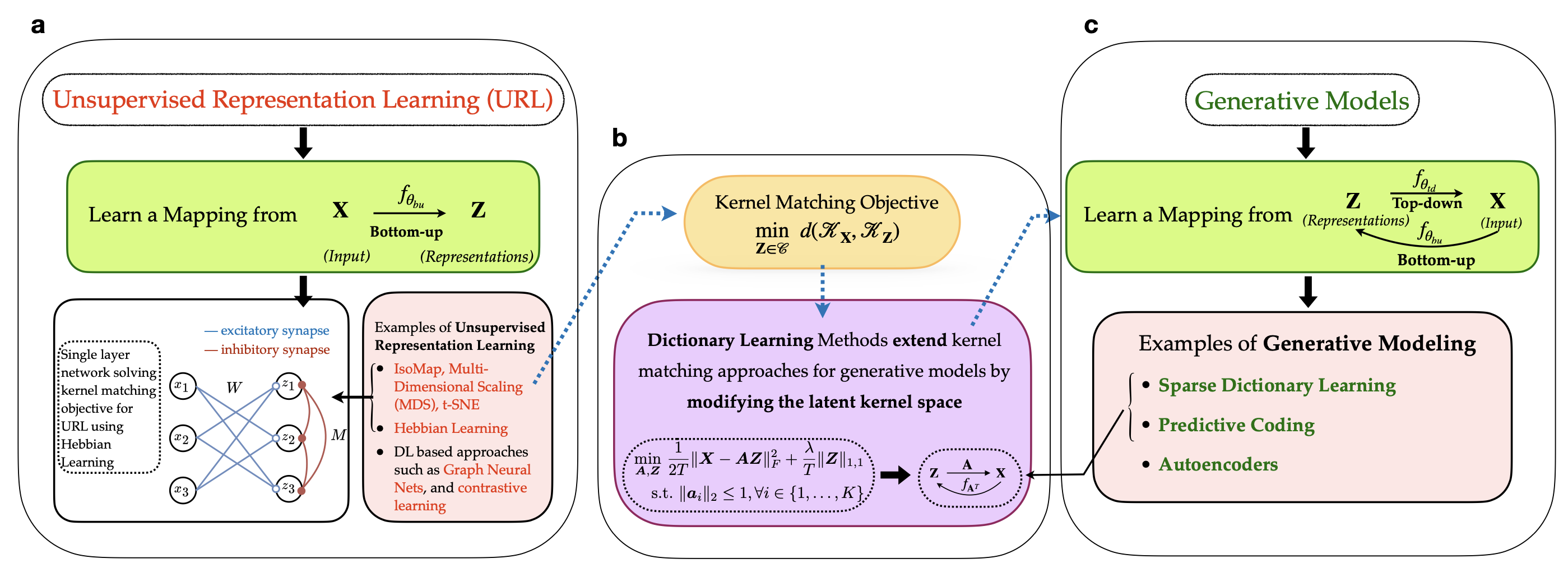

Implicit Generative Modeling by Kernel Similarity Matching

Brains don't just recognize patterns; they also predict what they will see. We propose a self-supervised framework that learns representations by aligning the similarity geometry of inputs with that of a learned latent space and, in doing so, reveals an implicit generative model that maps latent representations back to sensory data. Our key insight is an equivalence between kernel similarity matching objectives and dictionary learning, which lets us reinterpret representation learning as a bidirectional process: bottom-up encoding from stimuli to latents, coupled with top-down prediction from latents to stimuli. This perspective links modern similarity-based learning to biologically grounded ideas like predictive coding, offering a new route toward interpretable and neurally plausible representation learning.
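As a rough illustration of what "aligning similarity geometry" can mean, the sketch below scores how well the pairwise inner products of latent codes reproduce a kernel similarity computed on the inputs. It is a generic similarity matching loss written for this page, not the objective from the papers: the Gaussian kernel, the `bandwidth` parameter, the averaging over pairs, and all function names are assumptions.

```python
import math


def gaussian_kernel(a, b, bandwidth=1.0):
    """Input-space similarity (assumed kernel choice, for illustration only)."""
    squared_distance = sum((x - y) ** 2 for x, y in zip(a, b))
    return math.exp(-squared_distance / (2.0 * bandwidth ** 2))


def dot(a, b):
    """Latent-space similarity: a plain inner product."""
    return sum(x * y for x, y in zip(a, b))


def similarity_matching_loss(inputs, latents, bandwidth=1.0):
    """Mean squared mismatch between input and latent similarity matrices."""
    n = len(inputs)
    total = 0.0
    for i in range(n):
        for j in range(n):
            k_ij = gaussian_kernel(inputs[i], inputs[j], bandwidth)
            total += (k_ij - dot(latents[i], latents[j])) ** 2
    return total / (n * n)


# Two identical inputs have kernel similarity 1 with each other.
toy_inputs = [[0.0, 0.0], [0.0, 0.0]]
aligned_latents = [[1.0, 0.0], [1.0, 0.0]]     # latent similarity 1: matches
misaligned_latents = [[1.0, 0.0], [0.0, 1.0]]  # latent similarity 0: mismatch

loss_aligned = similarity_matching_loss(toy_inputs, aligned_latents)
loss_misaligned = similarity_matching_loss(toy_inputs, misaligned_latents)
```

Minimizing a loss of this kind over the latents pushes inputs that the kernel treats as similar toward similar codes. The generative, top-down reading described above comes from the equivalence with dictionary learning and is not shown in this sketch.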

Relevant papers

[1] Choudhary S., Masset P., Ba D. "Implicit generative modeling by kernel similarity matching", In Press, Neural Computation, 2026. [arxiv]

[2] Choudhary S., Masset P., Ba D. "Self supervised dictionary learning using kernel matching", IEEE International Workshop on Machine Learning for Signal Processing, 2024. [official]

Main researchers: Shubham Choudhary, Paul Masset

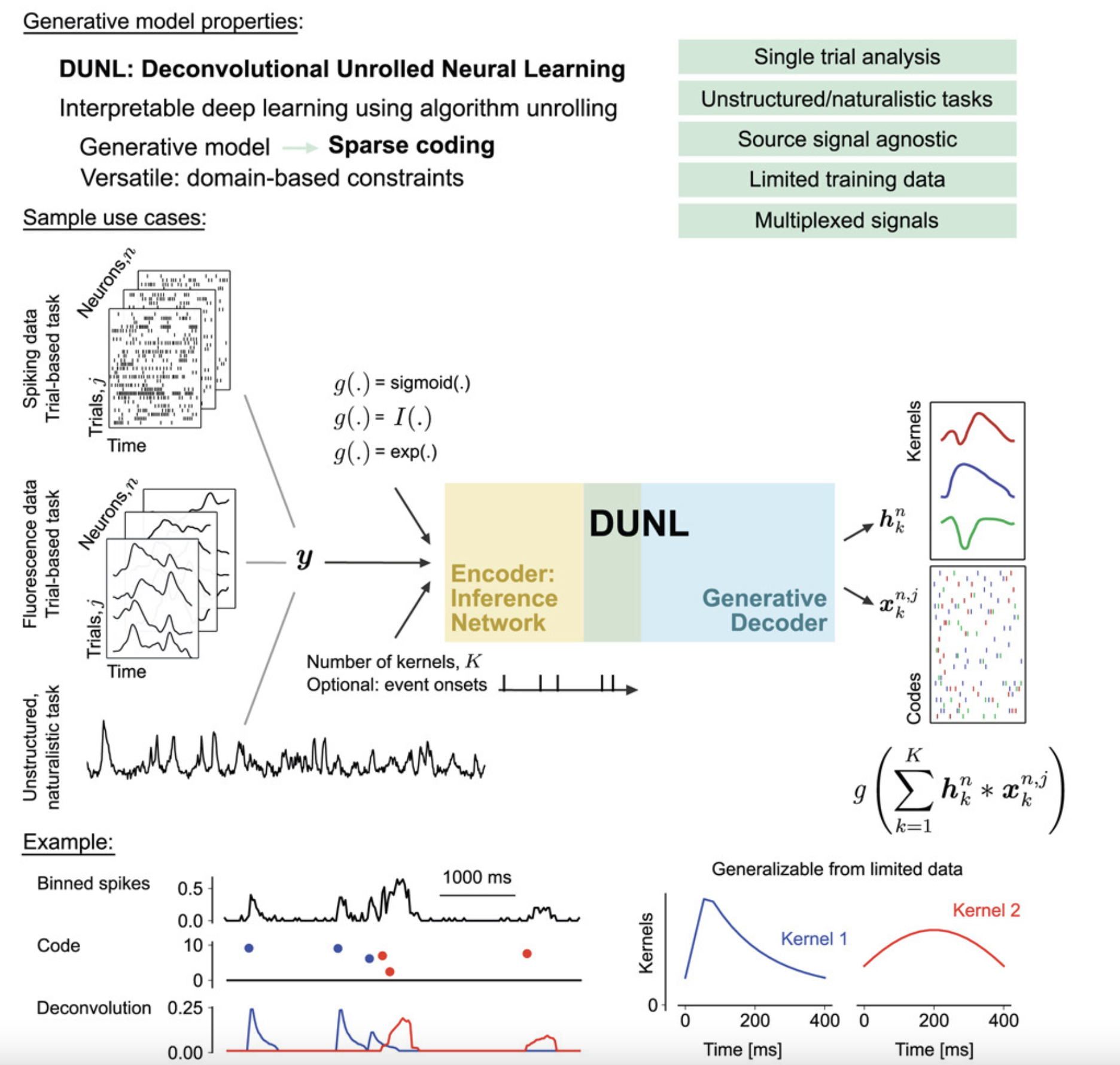

Interpretable Deep Learning for Deconvolutional Analysis of Neural Signals

The widespread adoption of deep learning to model neural activity often relies on "black-box" approaches that lack an interpretable connection between neural activity and network parameters. This project proposes using algorithm unrolling, a method for interpretable deep learning, to design the architecture of sparse deconvolutional neural networks. The resulting method, deconvolutional unrolled neural learning (DUNL), obtains a direct interpretation of network weights through a generative model and deconvolves neural data into a sparse set of interpretable, local components. DUNL is applied to uncover multiplexed salience and reward prediction error signals from midbrain dopamine neurons, perform simultaneous event detection and characterization in somatosensory thalamus recordings, and characterize the heterogeneity of neural responses in piriform cortex and striatum during naturalistic experiments.
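To make the idea of algorithm unrolling concrete, the sketch below unrolls ISTA (iterative shrinkage-thresholding), a standard sparse coding algorithm, so that each iteration plays the role of one network layer. It is a simplified illustration under stated assumptions, not the DUNL architecture: it uses a small dense dictionary rather than convolutional kernels, the weights are fixed rather than learned, and the names `unrolled_ista`, `step`, and `lam` are hypothetical.

```python
import math


def soft_threshold(values, threshold):
    """Shrink each entry toward zero; this nonlinearity produces sparsity."""
    return [math.copysign(max(abs(v) - threshold, 0.0), v) for v in values]


def unrolled_ista(signal, dictionary, n_layers, step, lam):
    """Run n_layers ISTA iterations, each viewed as one network layer.

    dictionary: list of atoms (each a list of floats). The generative model
    is signal ~ sum_k codes[k] * dictionary[k], so the atoms are directly
    interpretable as the components the signal is built from.
    """
    n_atoms = len(dictionary)
    codes = [0.0] * n_atoms
    for _ in range(n_layers):
        # Top-down pass: reconstruct the signal from the current codes.
        reconstruction = [
            sum(codes[k] * dictionary[k][i] for k in range(n_atoms))
            for i in range(len(signal))
        ]
        residual = [s - r for s, r in zip(signal, reconstruction)]
        # Bottom-up pass: correlate the residual with every atom.
        gradient = [sum(a * r for a, r in zip(atom, residual)) for atom in dictionary]
        # Gradient step followed by shrinkage. The same dictionary is reused
        # in every layer (tied weights).
        codes = soft_threshold(
            [c + step * g for c, g in zip(codes, gradient)], step * lam
        )
    return codes


# Toy example with an orthonormal dictionary (assumed for easy checking).
identity_atoms = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
observation = [3.0, 0.1, -2.0]
ista_codes = unrolled_ista(observation, identity_atoms, n_layers=5, step=1.0, lam=0.5)
```

In an unrolled network the dictionary would be a trainable parameter updated by backpropagation through these layers, which is what ties the learned weights to a generative model. In this toy case the small middle entry is thresholded to zero, leaving a sparse code.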

Relevant papers

[1] Tolooshams B.†, Matias S.†, Wu H., Murthy V. N., Masset P., Ba D. "Interpretable deep learning for deconvolutional analysis of neural signals", Cell Reports Methods, 2023.

Main researchers: Bahareh Tolooshams, Paul Masset

Learning Sparse Representations with Matching Pursuit

Sparse autoencoders have emerged as a common tool to extract sparse representations for mechanistic interpretability. This project proposes to replace their single-layer encoder with an unrolled version of Matching Pursuit, a greedy iterative algorithm for sparse approximation. With this new residual encoder architecture, we are able to extract hierarchical sparse representations in highly coherent dictionaries, which current sparse autoencoders fail to capture due to their shallow encoder. By connecting classical optimization algorithms to modern deep learning architectures through unrolling, this work demonstrates how principled algorithmic design can lead to more interpretable and effective neural network components while maintaining theoretical guarantees from the optimization literature.
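For readers unfamiliar with Matching Pursuit, a minimal sketch of the classical algorithm follows. Each greedy step selects the atom most correlated with the current residual and subtracts its contribution; unrolling a fixed number of these steps gives a residual-style encoder. This is the textbook algorithm on a hypothetical toy dictionary, not the encoder from the papers, and the function and variable names are illustrative.

```python
import math


def matching_pursuit(signal, dictionary, n_steps):
    """Greedy sparse approximation of `signal` over unit-norm atoms.

    Returns (codes, residual), where codes[k] is the coefficient of atom k.
    """
    residual = list(signal)
    codes = [0.0] * len(dictionary)
    for _ in range(n_steps):
        # Correlate every atom with what is still unexplained.
        correlations = [
            sum(a * r for a, r in zip(atom, residual)) for atom in dictionary
        ]
        # Pick the single best-matching atom (the greedy choice).
        best = max(range(len(dictionary)), key=lambda k: abs(correlations[k]))
        coefficient = correlations[best]
        codes[best] += coefficient
        # Remove that atom's contribution; the next step sees the new residual.
        residual = [r - coefficient * a for r, a in zip(residual, dictionary[best])]
    return codes, residual


def normalize(vector):
    norm = math.sqrt(sum(x * x for x in vector))
    return [x / norm for x in vector]


# Hypothetical toy dictionary: atoms 0 and 1 are highly correlated (coherent).
toy_atoms = [
    normalize([1.0, 0.0, 0.0]),
    normalize([1.0, 0.2, 0.0]),
    normalize([0.0, 0.0, 1.0]),
]
toy_signal = [2.0, 0.4, 1.0]  # built from atoms 1 and 2 only
mp_codes, mp_residual = matching_pursuit(toy_signal, toy_atoms, n_steps=3)
mp_residual_norm = math.sqrt(sum(r * r for r in mp_residual))
```

Because each selection is conditioned on the residual left by earlier selections, the procedure can tell apart the two nearly parallel atoms in this example, whereas a single linear-plus-threshold encoding step would activate both.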

Relevant papers

[1] Costa V., Fel T., Lubana E. S., Tolooshams B., Ba D. "From flat to hierarchical: Extracting sparse representations with Matching Pursuit", Neural Information Processing Systems, 2025.

[2] Costa V., Fel T., Lubana E. S., Tolooshams B., Ba D. "Evaluating sparse autoencoders: From shallow design to matching pursuit", ICML Workshop on Methods and Opportunities at Small Scale (MOSS), 2025.

Main researchers: Valerie Costa, Thomas Fel, Ekdeep Singh Lubana, Bahareh Tolooshams