NeuroAI — Current Projects

Perception and Neural Representation of Intermittent Odor Stimuli

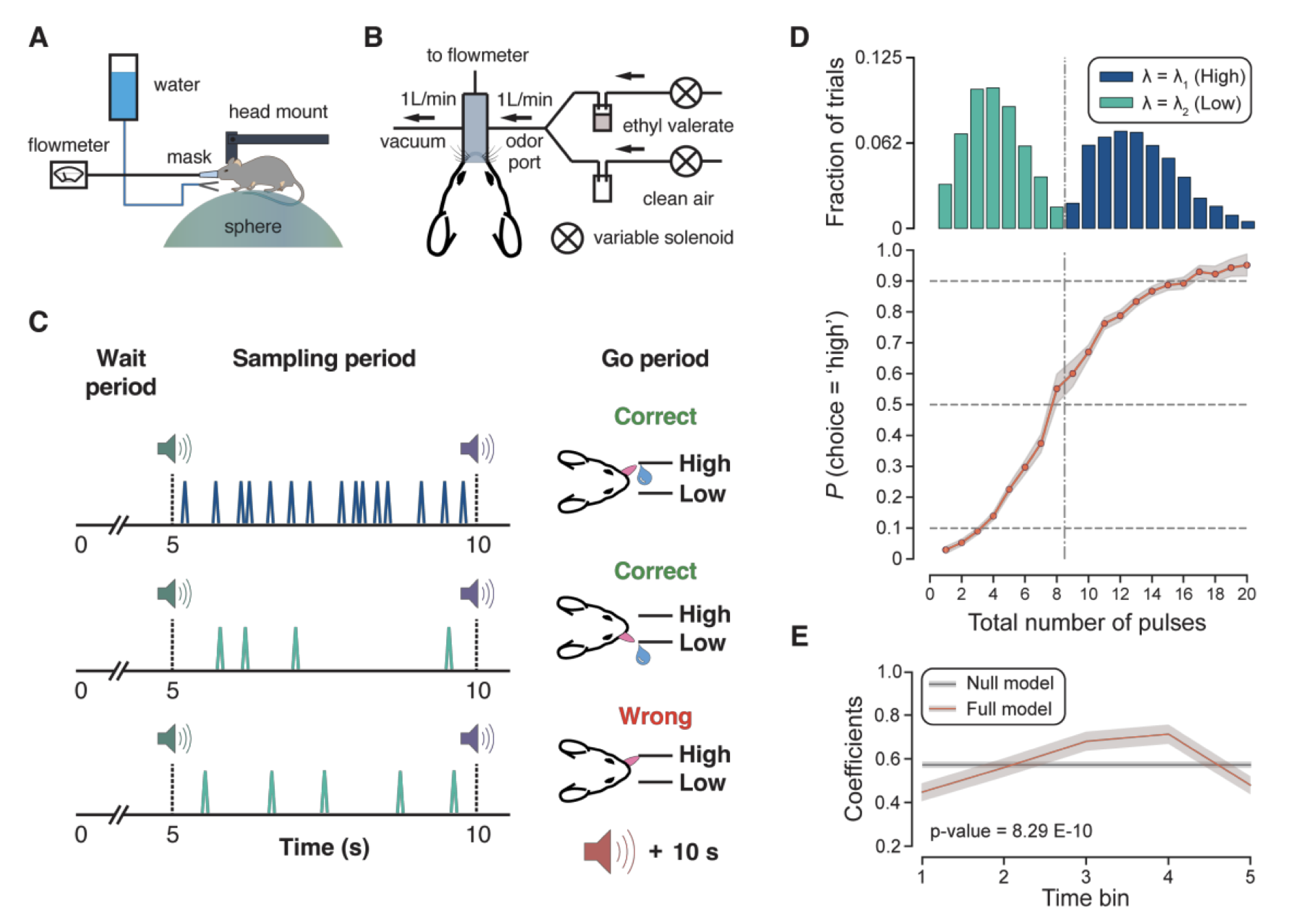

How do animals perceive and represent olfactory stimuli that arrive intermittently, as they naturally do in turbulent environments? This project investigates the neural mechanisms underlying the perception of intermittent odor stimuli in mice. By combining behavioral experiments with neural recordings in the olfactory system, we characterize how the brain encodes and integrates temporally structured sensory input, shedding light on the computational principles that support robust odor perception under naturalistic conditions.

Relevant papers

[1] Boero L.†, Wu H.†, Zak J. D., Masset P., Pashakhanloo F., Jayakumar S., Tolooshams B., Ba D., Murthy V. N. "Perception and neural representation of intermittent odor stimuli in mice", Nature Communications, 2026.

Main researchers: Bahareh Tolooshams

Traveling Waves for Spatial Information Integration

The act of vision is a coordinated activity involving millions of neurons in the visual cortex, which communicate over distances spanning up to centimeters on the cortical surface. How do these neurons perform this coordinated computation and effectively share information over such long distances? This project investigates how traveling waves of neural activity integrate spatial information across neural populations. Using convolutional recurrent neural networks that learn to generate traveling wave patterns, we demonstrate that wave dynamics effectively expand receptive fields for local neurons and enable long-range information communication. Our models outperform standard feed-forward networks on visual semantic segmentation tasks requiring global spatial integration while using fewer parameters, suggesting that wave dynamics offer efficiency and training stability benefits for both biological and artificial neural systems.
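As a rough illustration of how purely local recurrent updates can carry information over long distances, the sketch below steps a discretized 1D wave equation in which each cell reads only itself and its two neighbors. This is not the convolutional recurrent architecture from the paper; the function names (`wave_step`, `propagate_pulse`, `peak_position`), the 1D grid, and the unit wave speed are assumptions made for illustration only.

```python
def wave_step(u, u_prev, c2=1.0):
    """One update of a discretized 1D wave equation.

    Each cell is updated from itself and its two neighbors only,
    i.e. a 3-tap local kernel applied recurrently through time.
    c2 is the squared wave speed in grid units (assumed value).
    """
    n = len(u)
    u_next = [0.0] * n  # the two end cells stay clamped at zero
    for i in range(1, n - 1):
        laplacian = u[i - 1] - 2.0 * u[i] + u[i + 1]
        u_next[i] = 2.0 * u[i] - u_prev[i] + c2 * laplacian
    return u_next


def propagate_pulse(n_cells, source, n_steps):
    """Launch a unit pulse at `source` that moves right, then run n_steps updates."""
    u_prev = [0.0] * n_cells
    u = [0.0] * n_cells
    # Placing the previous state one cell to the left gives the pulse
    # a rightward velocity of one cell per step.
    u_prev[source - 1] = 1.0
    u[source] = 1.0
    for _ in range(n_steps):
        u, u_prev = wave_step(u, u_prev), u
    return u


def peak_position(u):
    """Index of the cell with the largest absolute activity."""
    return max(range(len(u)), key=lambda i: abs(u[i]))


if __name__ == "__main__":
    state = propagate_pulse(n_cells=50, source=5, n_steps=20)
    print("pulse is now at cell", peak_position(state))
```

In this toy setting the pulse advances one cell per update, so a cell `t` positions away from the source receives its signal after `t` steps even though no single update looks beyond immediate neighbors. That is the sense in which recurrent wave dynamics can widen an effective receptive field over time.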

Relevant papers

[1] Jacobs M., Budzinski R. C., Muller L., Ba D., Keller T. A. "Traveling waves integrate spatial information through time", Cognitive Computational Neuroscience, 2025.

Main researchers: Mozes Jacobs, Andy Keller

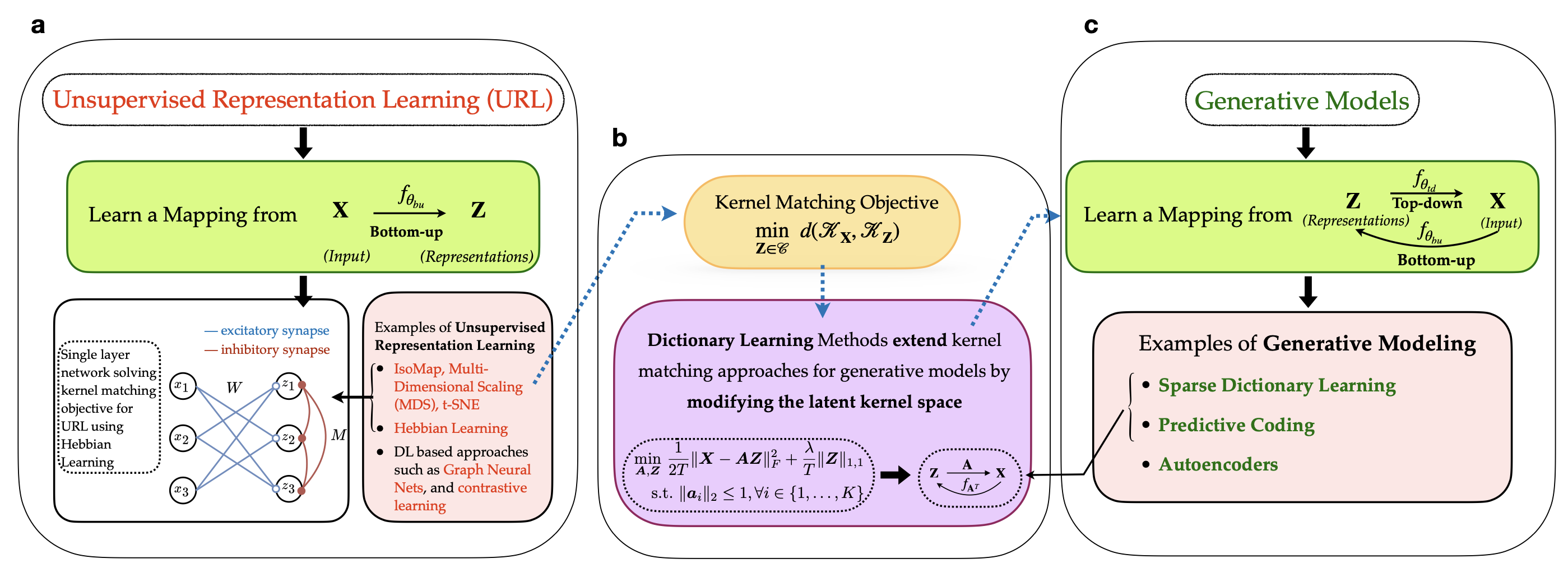

Implicit Generative Modeling by Kernel Similarity Matching

Brains don't just recognize patterns; they also predict what they will see. We propose a self-supervised framework that learns representations by aligning the similarity geometry of inputs with a learned latent space and, in doing so, reveals an implicit generative model that maps latent representations back to sensory data. Our key insight is an equivalence between kernel similarity matching objectives and dictionary learning, which lets us reinterpret representation learning as a bidirectional process: bottom-up encoding from stimuli to latents, coupled with top-down prediction from latents to stimuli. This perspective links modern similarity-based learning to biologically grounded ideas like predictive coding, offering a new route toward interpretable and neurally plausible representation learning.
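To make "aligning the similarity geometry of inputs with a learned latent space" concrete, here is a minimal sketch of a generic similarity matching loss: it compares a kernel similarity between every pair of inputs with the inner product of the corresponding latents. The exact objective, kernel, and constraints used in the papers may differ; the Gaussian kernel, the inner-product latent similarity, and all function names below are assumptions chosen for illustration.

```python
import math


def linear_kernel(x, y):
    """Plain inner product between two vectors."""
    return sum(a * b for a, b in zip(x, y))


def gaussian_kernel(x, y, bandwidth=1.0):
    """Gaussian (RBF) similarity; the bandwidth is an assumed hyperparameter."""
    sq_dist = sum((a - b) ** 2 for a, b in zip(x, y))
    return math.exp(-sq_dist / (2.0 * bandwidth ** 2))


def similarity_mismatch(inputs, latents, kernel):
    """Mean squared gap between input similarities and latent similarities.

    Input similarity is kernel(x_i, x_j); latent similarity is assumed
    to be the inner product z_i . z_j. A value of zero means the latent
    space reproduces the input similarity geometry exactly.
    """
    n = len(inputs)
    total = 0.0
    for i in range(n):
        for j in range(n):
            gap = kernel(inputs[i], inputs[j]) - linear_kernel(latents[i], latents[j])
            total += gap ** 2
    return total / (n * n)


if __name__ == "__main__":
    xs = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
    # Using the inputs themselves as latents matches a linear kernel exactly.
    print(similarity_mismatch(xs, xs, linear_kernel))
    # The same latents do not match a Gaussian kernel, so the loss is positive.
    print(similarity_mismatch(xs, xs, gaussian_kernel))
```

A learning procedure would adjust the latents (or the encoder producing them) to reduce this mismatch. The sketch only evaluates the loss and does not implement the dictionary learning equivalence or the top-down generative map described above.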

Relevant papers

[1] Choudhary S., Masset P., Ba D. "Implicit generative modeling by kernel similarity matching", In Press, Neural Computation, 2026. [arxiv]

[2] Choudhary S., Masset P., Ba D. "Self supervised dictionary learning using kernel matching", IEEE International Workshop on Machine Learning for Signal Processing, 2024. [official]

Main researchers: Shubham Choudhary, Paul Masset